The NVIDIA Titan V Deep Learning Deep Dive: It's All About The Tensor Cores

by Nate Oh on July 3, 2018 10:15 AM EST

HPE DLBS TensorRT: ResNet50 and ImageNet

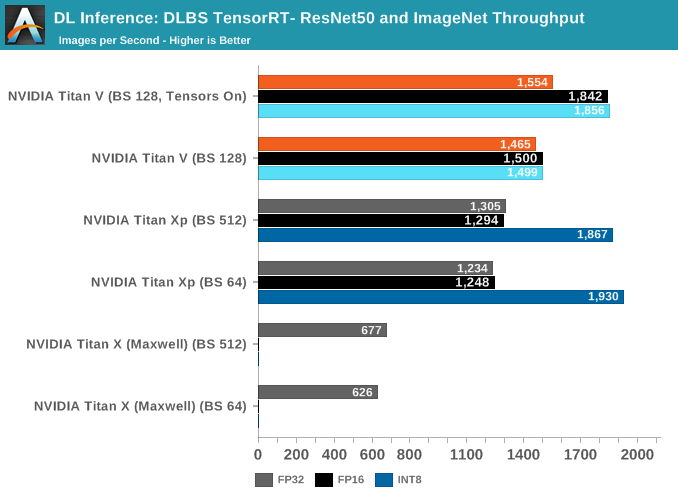

The other unique aspect of HPE DLBS is that it includes a benchmark for TensorRT, NVIDIA's inference optimization engine. In recent years, NVIDIA has pushed to integrate it with new DL features like INT8/DP4A and the tensor core 16-bit accumulator mode for inferencing.

Using a Caffe model, TensorRT adjusts the model as needed for inferencing at a given precision.
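To give a rough sense of what lowering precision to INT8 involves, here is a minimal sketch of symmetric linear quantization with a max-abs scale. This is one common textbook scheme and is only an assumption for illustration; it is not a description of TensorRT's own calibration procedure. The function names `quantize_int8` and `dequantize_int8` are hypothetical.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale.

    Assumption for illustration: the scale comes from the largest
    absolute value in the input (max-abs scaling).
    """
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized, scale):
    """Recover approximate floats from the quantized integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.3, -0.72, 0.05, 0.9]
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    print(q, scale)
    print(restored)
```

Under this scheme each restored value differs from the original by at most about half the scale, which is the kind of accuracy cost that is traded for faster integer math.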

In total, we ran batch sizes 64, 512, and 1024 for Titan X (Maxwell) and Titan Xp, and batch sizes 128, 256, and 640 for Titan V; the results were within 1 to 5% of the other batch sizes, so we've not included them in the graph.

The high INT8 performance of Titan Xp is broadly consistent with its GEMM/convolution performance; both workloads seem to be utilizing DP4A. Meanwhile, it's not clear how Titan V implements DP4A; all we know is that it is supported by the Volta instruction set, and that Volta does have those separate INT32 units.
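For readers unfamiliar with DP4A, the operation is a four-way dot product of 8-bit integers packed into 32-bit words, accumulated into a 32-bit integer. The following is a plain Python emulation of those semantics for signed inputs, not GPU code. The helper names (`pack_int8x4`, `unpack_int8x4`, `dp4a`) are hypothetical, and the wraparound to signed 32 bits on overflow is an assumption of this sketch.

```python
def pack_int8x4(values):
    """Pack four signed 8-bit integers into one 32-bit word, lane 0 in the low byte."""
    if len(values) != 4:
        raise ValueError("expected exactly four values")
    word = 0
    for lane, v in enumerate(values):
        if not -128 <= v <= 127:
            raise ValueError("value out of int8 range")
        word |= (v & 0xFF) << (8 * lane)
    return word


def unpack_int8x4(word):
    """Split a 32-bit word back into four signed 8-bit lanes."""
    lanes = []
    for lane in range(4):
        byte = (word >> (8 * lane)) & 0xFF
        lanes.append(byte - 256 if byte >= 128 else byte)
    return lanes


def dp4a(a_word, b_word, acc):
    """Emulate acc + dot(a, b) over four int8 lanes with a 32-bit accumulator."""
    a = unpack_int8x4(a_word)
    b = unpack_int8x4(b_word)
    total = acc + sum(x * y for x, y in zip(a, b))
    # Assumption of this sketch: wrap the result to a signed 32-bit integer.
    total &= 0xFFFFFFFF
    return total - (1 << 32) if total >= (1 << 31) else total


if __name__ == "__main__":
    a = pack_int8x4([1, -2, 3, -4])
    b = pack_int8x4([5, 6, -7, 8])
    print(dp4a(a, b, 10))  # -50
```

The point of the instruction is that four multiply-accumulates are issued as a single operation, which is why INT8 inference throughput can be well above FP32 throughput on hardware that supports it.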

65 Comments

SirCanealot - Tuesday, July 3, 2018 - link

No overclocking benchmarks. WAT. ¬_¬ (/s)

Thanks for the awesome, interesting write up as usual!

Chaitanya - Tuesday, July 3, 2018 - link

This is more of an enterprise product for consumers, so even if overclocking is enabled it's something that the targeted demographic is not going to use.

Samus - Tuesday, July 3, 2018 - link

woooooooosh

MrSpadge - Tuesday, July 3, 2018 - link

He even put the "end sarcasm" tag (/s) to point out this was a joke.

Ticotoo - Tuesday, July 3, 2018 - link

Where oh where are the MacOS drivers? It took 6 months to get the Pascal Titan drivers.

Hopefully soon

cwolf78 - Tuesday, July 3, 2018 - link

Nobody cares? I wouldn't be surprised if support gets dropped at some point. MacOS isn't exactly going anywhere.

eek2121 - Tuesday, July 3, 2018 - link

Quite a few developers and professionals use Macs. Also college students. By manufacturer market share Apple probably has the biggest share, if not then definitely in the top 5.

mode_13h - Tuesday, July 3, 2018 - link

I doubt it. Linux rules the cloud, and that's where all the real horsepower is at. Lately, anyone serious about deep learning is using Nvidia on Linux. It's only 2nd-tier players, like AMD and Intel, who really stand anything to gain by supporting niche platforms like Macs and maybe even Windows/Azure.

Once upon a time, Apple actually made a rackmount OS X server. I think that line has long since died off.

Freakie - Wednesday, July 4, 2018 - link

Lol, those developers and professionals use their Macs to remote in to their compute servers, not to do any of the number crunching with.

The idea of using a personal computer for anything except writing and debugging code is next to unheard of in an environment that requires the kind of power that these GPUs are meant to output. The machine they use for the actual computations is, 99.5% of the time, a dedicated server used for nothing but to complete heavy compute tasks, usually with no graphical interface, just straight command-line.

philehidiot - Wednesday, July 4, 2018 - link

If it's just a command line why bother with a GPU like this? Surely integrated graphics would do?

(Even though this is a joke, I'm not sure I can bear the humiliation of pressing "submit")